Most AI products die after the demo

Not because the technology failed — because the founder never confirmed the problem was urgent enough to warrant changing how people work.

Building has never been cheaper. A working prototype that would have taken a team three months can be ready by Friday. So founders build. They show demos. They get impressive reactions. Then they watch adoption stall — because impressive and essential are different things, and they never did the work to find out which one they had.

I've spent my whole career at the intersection of technology and design. At Stackpoint, we build 0→1 AI ventures inside a studio model, which means we've run this process repeatedly across industries and use cases. I helped build all nine of our ventures from zero, four of them vertical AI companies, with three more in motion right now. As an active adviser and angel investor in AI startups, I see the same patterns play out across even more contexts: what actually fails early, and what creates the conditions for real adoption.

What follows is the playbook we actually use at Stackpoint.

Step 1: Start with the problem, not the possibility

In the current AI moment, most product conversations start solution-shaped. "We can use AI to automate X." "What if AI could do Y?" It's a natural instinct when capability is moving this fast — but it's the wrong place to build from.

The question that actually matters is: how much does this problem cost the customer right now, in time, money, or risk? If you can't answer that concretely, you're not ready to build.

The AI products that get adopted aren't solving interesting problems. They're solving urgent ones. An interesting problem gets nodded at in meetings. An urgent problem creates natural pull: it's a top-three issue, something your customer is actively losing money on (the financial signal) or cobbling together their own fixes for every day (the behavioral signal).

Urgency gets them to the table. Importance keeps them there.

Customers will divert budget for a problem that's costing them now. They'll champion it internally when solving it is tied to something that genuinely matters to their success. They'll forgive early rough edges because the cost of not solving this is higher than the cost of imperfect software.

Before committing to a use case, run it through three questions:

Is this problem in their top three? Not a nice-to-have, not something that came up once in a conversation — a recurring, costly pain they're actively trying to solve. If they're not already spending money on it or cobbling together their own fixes, it probably isn't urgent enough.

Would they notice immediately if it disappeared? A useful test: if your product stopped working tomorrow, would your customer feel it by the end of the day? If the honest answer is "probably not for a while," the problem isn't urgent enough.

Is the friction measurable in business terms? Time lost, revenue at risk, compliance exposure, cost overruns. If you can't tie the problem to a number the customer's business cares about, you'll struggle to build a value case that justifies adoption — or price.
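The three questions above can be written down as a simple screen. The sketch below is a minimal illustration in Python, not a tool Stackpoint has published: the `ProblemSignal` fields and the `urgency_gaps` function are hypothetical names, and the answers are assumed to come from real customer conversations rather than guesswork.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProblemSignal:
    """Discovery answers for one candidate use case (hypothetical structure)."""
    in_top_three: bool                # customer ranks it among their top three problems
    spending_or_workaround: bool      # already paying for a fix or cobbling one together
    felt_within_a_day: bool           # they would notice by end of day if the fix vanished
    annual_cost_usd: Optional[float]  # measured business cost; None if nobody can quantify it


def urgency_gaps(signal: ProblemSignal) -> List[str]:
    """Return the screening questions this use case fails. An empty list means it passes."""
    gaps = []
    if not (signal.in_top_three and signal.spending_or_workaround):
        gaps.append("not a top-three problem backed by spend or workarounds")
    if not signal.felt_within_a_day:
        gaps.append("customer would not notice quickly if it disappeared")
    if signal.annual_cost_usd is None or signal.annual_cost_usd <= 0:
        gaps.append("friction is not tied to a business number")
    return gaps


# Example: a problem the customer cares about but cannot yet quantify.
candidate = ProblemSignal(
    in_top_three=True,
    spending_or_workaround=True,
    felt_within_a_day=True,
    annual_cost_usd=None,
)
print(urgency_gaps(candidate))
```

Returning the list of gaps, rather than a single pass or fail, keeps the focus on which question to take back to the customer next.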

The trap that AI specifically creates here is the temptation to find a problem that fits what the technology can do, rather than starting from what the customer desperately needs. That's how you end up with technically impressive products solving problems that aren't painful enough to drive behavior change.

There's a subtler trap too, one that's easy to miss: AI tools are remarkably good at making bad ideas sound reasonable. Describe your concept to an AI assistant and ask it to pressure-test the idea — you'll get a structured, thoughtful-sounding response that acknowledges a few risks and affirms the core opportunity. It feels like validation. It isn't. AI reflects your assumptions back at you with more polish. It doesn't have the standing to tell you the problem isn't actually urgent, because it has never watched a land developer sweat over a site acquisition or a construction manager scramble to close a cost overrun. That kind of honest signal only comes from real people with real stakes in the problem. Used uncritically, AI becomes an echo chamber for your own conviction — and a fast one at that. The antidote is the same as it's always been: get in front of customers, ask uncomfortable questions, and let their behavior tell you more than their words.

Step 2: Pick the right wedge

Once you've found a real, urgent problem, the next mistake is trying to solve it all at once.

The wedge is the smallest slice of that problem where you can deliver complete, measurable value to the customer — end to end, from input to outcome. Not a feature. Not a piece of the workflow. A full solution to a specific, contained version of the problem.

Picking the wedge feels uncomfortable. It always feels too small. Like you're leaving other opportunities on the table. Like a competitor could copy it easily. But the wedge isn't your moat — it's your proof point. Its job is to create a win that the customer can feel and measure, fast enough that they trust you with more.

The best vertical AI wedges share a few characteristics.

They play to what AI is already reliably good at: processing high volumes of information, surfacing patterns in data, drafting outputs that would otherwise take a human hours.

They start adjacent to the most complex or mission-critical decision in the workflow, not from it. They focus on removing friction around a decision rather than making the decision itself.

They fit into the customer's existing workflow without requiring significant behavior change. In vertical B2B markets — construction, real estate, finance — buyers are time-stretched and tech-skeptical. A product that works brilliantly but demands a new habit to access will lose to a simpler product that fits naturally into what the customer already does. Meet them where they are first. Expand from there.

Narrow, deep, and workflow-native. That's the wedge.

Step 3: Get inside the workflow before you build

This is the step most technical founders compress, and it's the one that costs the most later.

Discovery in vertical B2B isn't desk research. It's not surveys or analyst reports. It's watching someone do their job — understanding the tools they switch between, the steps they hate, the information they're missing when they make a decision, the workarounds they've built because no existing tool solves it cleanly. That knowledge doesn't come from anywhere other than direct, sustained access to practitioners.

The process we use: identify the specific role and workflow you're targeting, map it step by step, then find the moments where the friction is highest and the current tools are most obviously inadequate. Collect the actual artifacts of the work: documents, spreadsheets, checklists, process flows. Understand what "done well" looks like and what it currently costs to get there.

What makes this hard in vertical markets is that access is everything. Breaking into industries like construction or commercial real estate as an outsider is genuinely difficult. The practitioners who would make the best design partners are busy, skeptical of vendors, and protective of how they work. Getting into those conversations — and getting the depth of access that produces real insight — is a relationship sport.

This is one of the places where Stackpoint's studio model creates real leverage. Many of the ventures we build don't start with a founder pitching us a solution — they start with an operator, buyer, or industry advisor in our network flagging a pain point they're living with. That's a richer starting point than a cold thesis. We run our own discovery process to validate the problem — talking to more people across the network, pressure-testing the urgency and frequency, checking whether it's one person's frustration or a pattern. When the signal holds, we go deeper with onsite visits. An onsite shows you what they actually do — where they slow down, what they've stopped noticing, where they reach for a workaround. That quality of insight can't be replicated remotely, and it's a standard we push hard on at Stackpoint. That network-rooted, discovery-validated approach means we can identify and confirm the right problem, and get a founding team in front of the right design partners, significantly faster than starting from scratch. That compression — from initial signal to validated wedge — is often the difference between finding product-market fit in months versus years.

Step 4: Design for the right level of human involvement

This is where most AI product thinking goes wrong, and where the most consequential product decisions actually live.

The instinct is to default to human-in-the-loop because it feels safer. But that's not the right frame. The real design challenge is knowing when human judgment is essential — and when it actually introduces cost, inconsistency, and delay without adding value. Both errors kill adoption. Getting this decision right starts with understanding the nature of the work you're automating.

Two dimensions matter most. First: how much does the quality of the outcome depend on professional judgment, contextual nuance, and tolerance for ambiguity? Second: how consistent, rule-bound, and high-volume is the task? These two dimensions pull in opposite directions — and they determine where on the spectrum between full human control and full automation your product should sit.

Most workflows fall somewhere between two poles: craft work and clockwork.

Craft work (high-skilled, high-variance, judgment-based) — where experienced professionals make judgment calls under uncertainty, weighing trade-offs that shift with every situation — demands that humans stay meaningfully in the loop. Not as a safety net, but as an active participant in the output. The AI's job here is to do the heavy processing and surface the right inputs; the human's job is to apply the judgment that the AI genuinely can't replicate. Remove the human too early and you don't just lose trust — you lose accuracy on the decisions that matter most.

Clockwork (high-volume, pattern-dense, process-based) — where the value comes from speed and reliability rather than interpretation — often demands the opposite. Human involvement introduces variability, bottlenecks, and costs without improving outcomes. In these workflows, partial automation is frequently worse than full automation: it creates handoff friction, diffuses accountability, and undermines the speed advantage that made AI attractive in the first place.

When human-in-the-loop is the answer: craft work

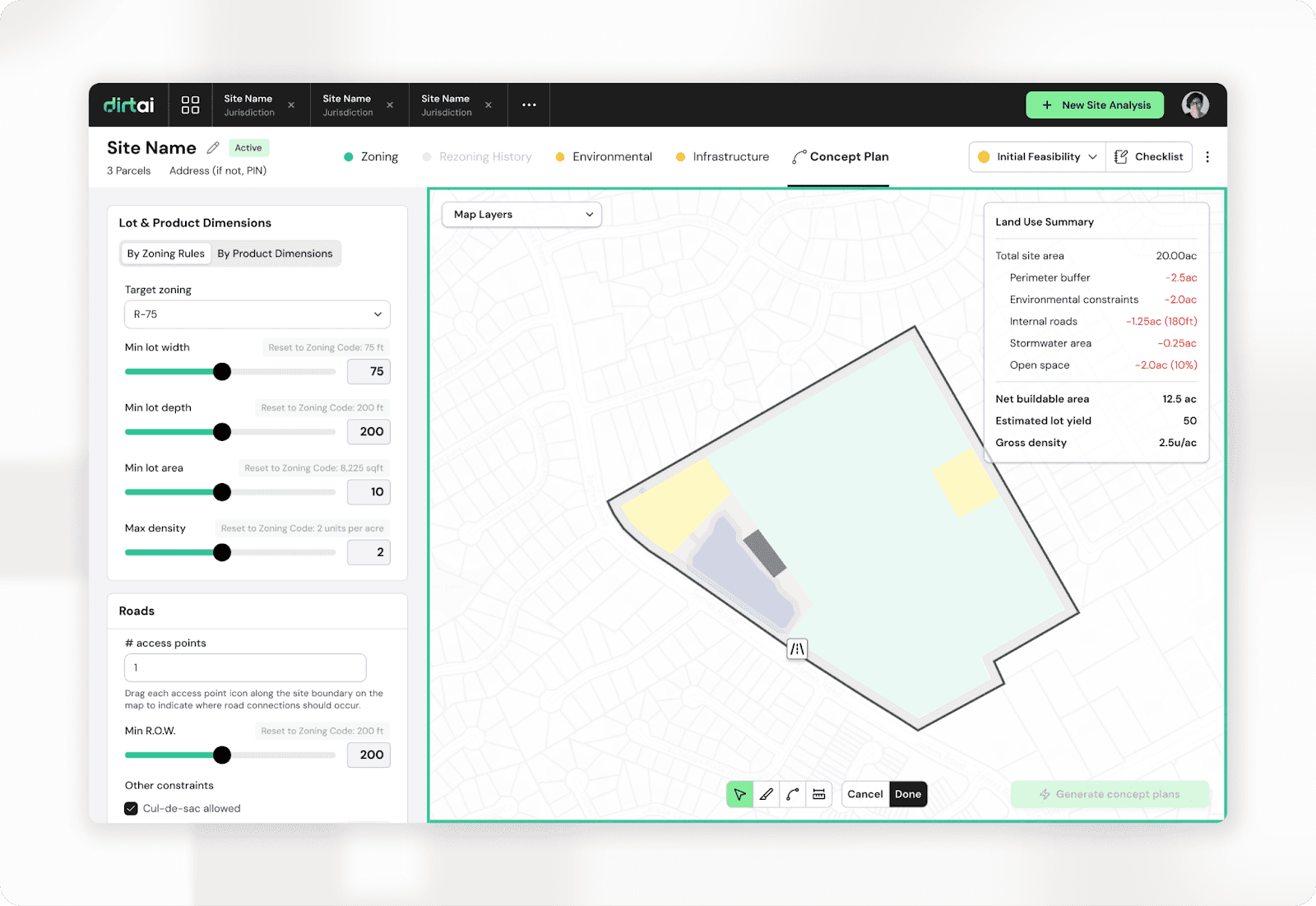

The Dirt team, building a land feasibility tool for real estate developers, encountered this firsthand. The AI was genuinely capable — it could ingest site data, run financial calculations, and generate unit yield in a fraction of the time an analyst would need. Early demos impressed. But early users weren't acting on the outputs.

The model wasn't the problem. The workflow was.

Developers making land acquisition decisions are managing significant financial risk. They don't just want a recommendation. They need to stress-test it — to see the assumptions behind it, challenge them, and run their own scenarios before staking a deal on what the AI produced. The missing piece wasn't more accuracy. It was a sandbox environment where the developer's judgment could actively engage with the AI's work, rather than just receive it. Once the product was redesigned around that workflow — with scenario controls, constraint overrides, and full visibility into the model's inputs — adoption followed. The breakthrough wasn't a model improvement. It was understanding how a land developer actually makes decisions under uncertainty.

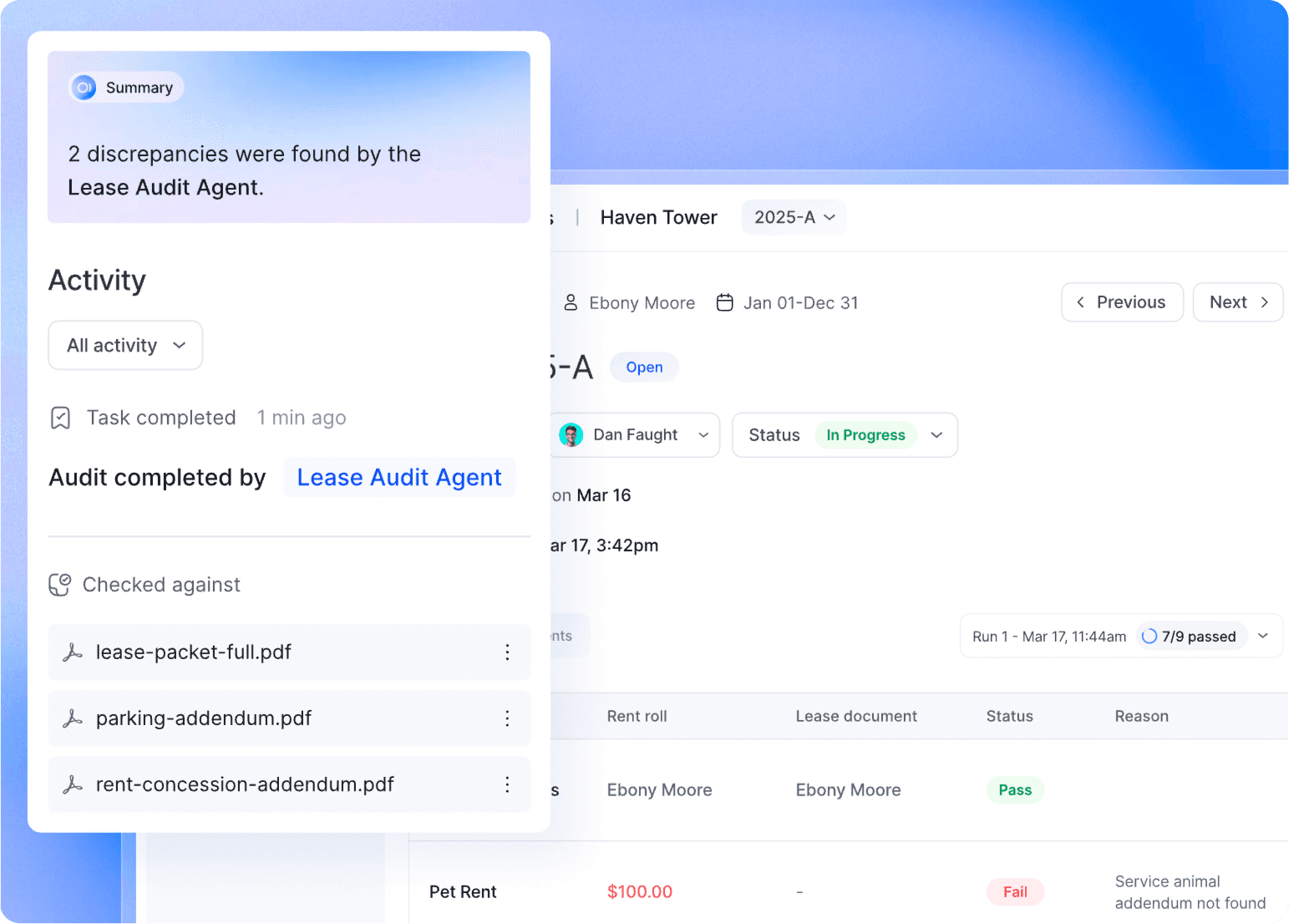

When full automation is the answer: clockwork

The Surface team, building a lease audit tool for multifamily property operations, faced the opposite problem. Early versions flagged exceptions for human review — a design that felt responsible but turned out to be the adoption blocker. Lease auditing is high-volume, rule-bound work: leases either comply with their terms or they don't. Human review didn't improve accuracy. It slowed the product to a pace that eliminated its core value proposition — the ability to audit an entire portfolio in minutes rather than weeks. Adoption didn't take off until the product handled the end-to-end workflow autonomously. The humans who'd been reviewing outputs were freed to act on the findings, not produce them.

The decision that matters

These two examples aren't exceptions. They're illustrations of a design decision every AI product team needs to make explicitly — not by default, not by instinct, and not by assuming more human involvement is always safer.

Ask two questions before you design the human's role: Is this craft work or clockwork? And does the volume and consistency of this task make human involvement a bottleneck rather than a safeguard? The answers will point you toward the right design — and getting this right early is often the difference between a product that earns trust and one that stalls waiting for it.
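Those two questions can be sketched as a small decision helper. This is an illustrative simplification under stated assumptions, not a formal framework from Stackpoint: the `HumanRole` enum and `recommend_human_role` function are hypothetical names, the two dimensions are reduced to yes/no answers, and mixed cases are returned as undecided because the playbook does not prescribe a rule for them.

```python
from enum import Enum


class HumanRole(Enum):
    ACTIVE_PARTICIPANT = "human stays in the loop and engages with the AI's work"
    FULL_AUTOMATION = "AI handles the workflow end to end"
    UNDECIDED = "mixed signals; study the workflow further"


def recommend_human_role(judgment_dependent: bool, rule_bound_high_volume: bool) -> HumanRole:
    """Map the craft-work / clockwork questions to a starting design stance."""
    if judgment_dependent and not rule_bound_high_volume:
        # Craft work: outcome quality depends on professional judgment.
        return HumanRole.ACTIVE_PARTICIPANT
    if rule_bound_high_volume and not judgment_dependent:
        # Clockwork: human review is a bottleneck rather than a safeguard.
        return HumanRole.FULL_AUTOMATION
    # Assumption: anything in between is left for further discovery.
    return HumanRole.UNDECIDED


# Land feasibility decisions (the Dirt example) read as craft work.
print(recommend_human_role(judgment_dependent=True, rule_bound_high_volume=False).name)
# Lease auditing (the Surface example) reads as clockwork.
print(recommend_human_role(judgment_dependent=False, rule_bound_high_volume=True).name)
```

The result is only a starting stance for design. It says nothing about how much visibility early users should have into the outputs while trust is being built.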

One note that applies regardless of where your workflow sits on that spectrum: at the very beginning, with your earliest users, optimize for trust before you optimize for automation. Even in clockwork workflows where full automation is the eventual destination, early users need to see the AI's outputs, validate them against their own knowledge, and arrive at their own conviction that the system works. That trust-building phase isn't wasted time — it's what converts early adopters into champions who will defend the product internally and pull it through the organization. Skip it, and you may ship a fully automated product that nobody believes in enough to rely on.

Step 5: Measure value in the customer's language

This last step is the one most teams underinvest in, and the one that determines whether you can prove you've succeeded, expand the relationship, and build the case for the next customer.

AI products are easy to measure in technical terms. Model accuracy. Latency. Throughput. Those metrics matter internally, but they tell your customer nothing about whether their problem is getting solved. Define from the beginning how you'll track success in business terms.

What specific business metric does this product move? Not just proxy metrics — the real ones. Not "reduction in time to close a deal" but what that translates to: more deals closed per quarter, higher revenue per rep, lower cost of sale. Not "hours saved per project" but what those hours recover: faster project throughput, lower cost-to-deliver, margin recaptured on every job. The discipline is always the same — follow the proxy until it hits a dollar sign. That's the metric that moves budgets and renews contracts.

Then make it visible. Show the customer the value they're getting, not just the outputs the AI is producing. A summary of findings is a feature. A dashboard showing "you've saved 23 hours this month and reduced rework costs by $14,000" is a reason to renew.
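Following a proxy metric until it hits a dollar sign is simple arithmetic once the inputs are agreed with the customer. The sketch below is a minimal example in Python: the function names are hypothetical, the hours and rework figures reuse the dashboard example above, and the loaded hourly rate of $85 is an invented placeholder that would need to come from the customer's own numbers.

```python
def monthly_value_usd(hours_saved: float,
                      loaded_hourly_rate_usd: float,
                      rework_savings_usd: float) -> float:
    """Convert hours saved (a proxy) plus direct savings into one dollar figure."""
    return hours_saved * loaded_hourly_rate_usd + rework_savings_usd


def value_headline(hours_saved: float, rework_savings_usd: float) -> str:
    """Format the customer-facing line a value dashboard might show."""
    return (
        f"You've saved {hours_saved:g} hours this month "
        f"and reduced rework costs by ${rework_savings_usd:,.0f}"
    )


# Illustrative inputs only; the hourly rate is an assumption, not real data.
total = monthly_value_usd(hours_saved=23, loaded_hourly_rate_usd=85.0, rework_savings_usd=14_000)
print(value_headline(23, 14_000))
print(f"Total monthly value: ${total:,.0f}")
```

Keeping the hourly rate as an explicit input makes the assumption visible, so the customer can challenge or replace it instead of distrusting the total.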

This also shapes how you talk about the product externally. "We use AI to analyze your project documents" is a capability statement. "We help construction managers catch cost overruns before they happen, typically saving 8–12% on project costs" is a value statement. Customers buy the second one.

Define your value metric before you write your first line of code. It will clarify the product decisions, sharpen the scope, and give you something real to optimize toward.

The playbook isn't new — AI just changes the speed

Everything in this process — finding urgent problems, narrowing the scope, building close to the customer, designing for trust, measuring outcomes — is how good products have always been built. None of it is specific to AI.

What AI changes is the speed at which you can run the playbook. You can prototype faster, test concepts with users earlier, and make early versions feel real before committing to production infrastructure. Those are genuine advantages, and founders who use them well are significantly compressing the 0→1 timeline.

What AI doesn't change is what you're running toward. The customer still needs to feel the pain urgently enough to adopt something new. The product still has to fit into how they already work. The AI still has to earn trust before people rely on it for decisions that matter. Those fundamentals haven't moved.

The founders who win with AI aren't the ones with access to the best models. They're the ones who are most disciplined about discovery — and who understand that the playbook exists to serve the customer, not to show off what the technology can do.

Brainstorming an AI solution right now? Take a moment to check out the Solution Discovery Scorecard. These are the exact criteria Stackpoint uses to pressure-test opportunities and determine our best strategic bet and the optimal starting point.